How the hell are GPUs so fast? A HPC walk along Nvidia CUDA-GPU architectures. From zero to nowadays. | by Adrian PD | Towards Data Science

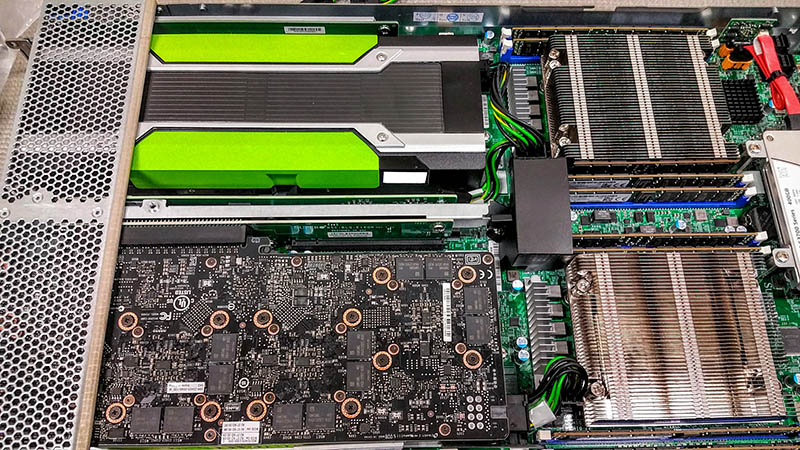

NVIDIA Multi GPU CUDA Workstation PC | Recommended hardware | Customize and Buy the Best Multi GPU Workstation Computers

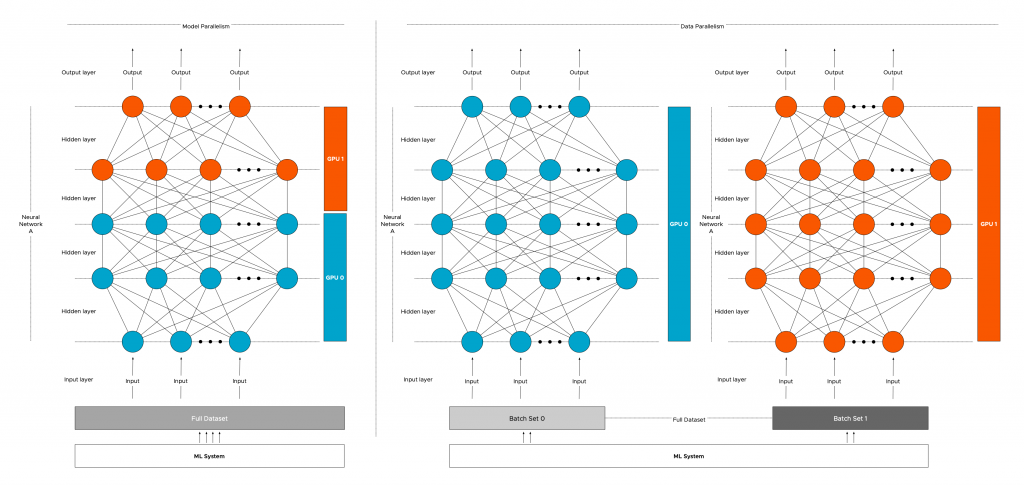

![Multi GPU Programming with MPI and OpenACC [15]](https://www.researchgate.net/publication/332087606/figure/fig1/AS:741965318606848@1553909725334/Multi-GPU-Programming-with-MPI-and-OpenACC-15.jpg)